An artist’s rendition of the photoacoustic airborne sonar system operating from a drone to sense and image underwater objects. Credit: Kindea Labs

The “Photoacoustic Airborne Sonar System” could be installed beneath drones to enable aerial underwater surveys and high-resolution mapping of the deep ocean.

Stanford University engineers have developed an airborne method for imaging underwater objects by combining light and sound to break through the seemingly impassable barrier at the interface of air and water.

The researchers envision their hybrid optical-acoustic system one day being used to conduct drone-based biological marine surveys from the air, carry out large-scale aerial searches for sunken ships and planes, and map the ocean depths with a speed and level of detail similar to that of Earth’s landscapes. Their “Photoacoustic Airborne Sonar System” is detailed in a recent study published in the journal IEEE Access.

“Airborne and spaceborne radar and laser-based, or LIDAR, systems have been able to map Earth’s landscapes for decades. Radar signals are even able to penetrate cloud coverage and canopy coverage. However, seawater is much too absorptive for imaging into the water,” said study leader Amin Arbabian, an associate professor of electrical engineering in Stanford’s School of Engineering. “Our goal is to develop a more robust system which can image even through murky water.”

Energy loss

Oceans cover about 70 percent of the Earth’s surface, yet only a small fraction of their depths have been subjected to high-resolution imaging and mapping.

The main barrier has to do with physics: Sound waves, for example, cannot pass from air into water or vice versa without losing most – more than 99.9 percent – of their energy through reflection against the other medium. A system that tries to see underwater using sound waves traveling from air into water and back into air is subjected to this energy loss twice – resulting in a 99.9999 percent energy reduction.
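
The compounding behind that figure is simple arithmetic. The short Python sketch below is illustrative only: it takes the article's figure of 99.9 percent lost per crossing as an assumed input (the article says the true loss is higher), and the function name is hypothetical.

```python
import math


def remaining_fraction(loss_per_crossing: float, crossings: int) -> float:
    """Fraction of acoustic energy left after crossing the air-water
    boundary `crossings` times, losing the same share each time."""
    return (1.0 - loss_per_crossing) ** crossings


# Assumed input: 99.9 percent of the energy is lost at each crossing.
LOSS_PER_CROSSING = 0.999

for n in (1, 2):
    kept = remaining_fraction(LOSS_PER_CROSSING, n)
    loss_percent = 100.0 * (1.0 - kept)
    loss_db = -10.0 * math.log10(kept)  # the same loss expressed in decibels
    print(f"{n} crossing(s): {loss_percent:.4f} percent lost, about {loss_db:.0f} dB")
```

Under that assumption, one crossing keeps about one thousandth of the energy and two crossings keep about one millionth, which is where the 99.9999 percent figure comes from.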

Similarly, electromagnetic radiation – an umbrella term that includes light, microwave and radar signals – also loses energy when passing from one physical medium into another, although the mechanism is different than for sound. “Light also loses some energy from reflection, but the bulk of the energy loss is due to absorption by the water,” explained study first author Aidan Fitzpatrick, a Stanford graduate student in electrical engineering. Incidentally, this absorption is also the reason why sunlight can’t penetrate to the ocean depths and why your smartphone – which relies on cellular signals, a form of electromagnetic radiation – can’t receive calls underwater.

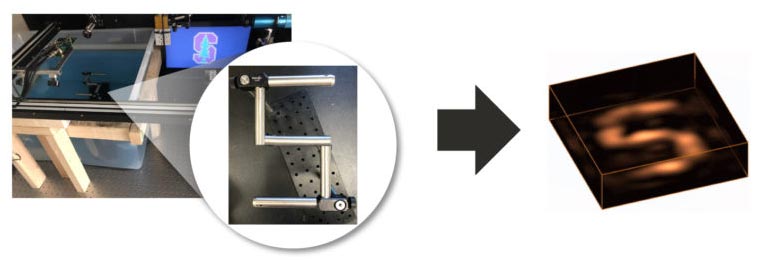

The experimental Photoacoustic Airborne Sonar System setup in the lab (left). A Stanford “S” submerged beneath the water (middle) is reconstructed in 3D using reflected ultrasound waves (right). Credit: Aidan Fitzpatrick

The upshot of all of this is that oceans can’t be mapped from the air and from space in the same way that the land can. To date, most underwater mapping has been achieved by attaching sonar systems to ships that trawl a given region of interest. But this technique is slow, costly, and inefficient for covering large areas.

An invisible jigsaw puzzle

Enter the Photoacoustic Airborne Sonar System (PASS), which combines light and sound to break through the air-water interface. The idea for it stemmed from another project that used microwaves to perform “non-contact” imaging and characterization of underground plant roots. Some of PASS’s instruments were initially designed for that purpose in collaboration with the lab of Stanford electrical engineering professor Butrus Khuri-Yakub.

At its heart, PASS plays to the individual strengths of light and sound. “If we can use light in the air, where light travels well, and sound in the water, where sound travels well, we can get the best of both worlds,” Fitzpatrick said.

To do this, the system first fires a laser from the air that gets absorbed at the water surface. When the laser is absorbed, it generates ultrasound waves that propagate down through the water column and reflect off underwater objects before racing back toward the surface.

The returning sound waves are still sapped of most of their energy when they breach the water surface, but by generating the sound waves underwater with lasers, the researchers can prevent the energy loss from happening twice.

“We have developed a system that is sensitive enough to compensate for a loss of this magnitude and still allow for signal detection and imaging,” Arbabian said.

An animation showing the 3D image of the submerged object recreated using reflected ultrasound waves. Credit: Aidan Fitzpatrick

The reflected ultrasound waves are recorded by instruments called transducers. Software is then used to piece the acoustic signals back together like an invisible jigsaw puzzle and reconstruct a three-dimensional image of the submerged feature or object.
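
One ingredient of any such reconstruction is timing: how long an echo takes to arrive says how far away the reflector is. The Python sketch below is not the researchers' algorithm. It assumes a purely vertical path, typical textbook sound speeds, a negligible laser travel time, and hypothetical function names, simply to show how an echo delay could map to a depth.

```python
SPEED_OF_SOUND_WATER = 1480.0  # m/s, assumed typical value
SPEED_OF_SOUND_AIR = 343.0     # m/s, assumed typical value near 20 degrees C


def echo_delay(depth_m: float, transducer_height_m: float) -> float:
    """Seconds between ultrasound being generated at the water surface and
    its echo reaching an airborne transducer: down and back up through the
    water, then up through the air (vertical path only)."""
    water_time = 2.0 * depth_m / SPEED_OF_SOUND_WATER
    air_time = transducer_height_m / SPEED_OF_SOUND_AIR
    return water_time + air_time


def depth_from_delay(delay_s: float, transducer_height_m: float) -> float:
    """Invert echo_delay: estimate reflector depth from a measured delay."""
    water_time = delay_s - transducer_height_m / SPEED_OF_SOUND_AIR
    if water_time < 0.0:
        raise ValueError("delay is shorter than the air path alone")
    return 0.5 * SPEED_OF_SOUND_WATER * water_time


if __name__ == "__main__":
    delay = echo_delay(depth_m=5.0, transducer_height_m=10.0)
    print(f"delay: {delay * 1000:.2f} ms")
    print(f"recovered depth: {depth_from_delay(delay, 10.0):.2f} m")
```

A real system would combine many such delays from many transducers and angles; this sketch only covers the single-path case.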

“Similar to how light refracts or ‘bends’ when it passes through water or any medium denser than air, ultrasound also refracts,” Arbabian explained. “Our image reconstruction algorithms correct for this bending that occurs when the ultrasound waves pass from the water into the air.”
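
The bending Arbabian describes follows Snell's law, which relates the angles on either side of a boundary to the wave speeds in each medium. The Python sketch below only illustrates that law and is not the team's reconstruction code; the sound speeds are assumed typical values and the function name is hypothetical.

```python
import math

SPEED_OF_SOUND_WATER = 1480.0  # m/s, assumed typical value
SPEED_OF_SOUND_AIR = 343.0     # m/s, assumed typical value near 20 degrees C


def refracted_angle_deg(incidence_deg: float, c_from: float, c_to: float) -> float:
    """Angle from the surface normal after crossing a flat boundary,
    using Snell's law: sin(theta_to) / c_to = sin(theta_from) / c_from."""
    s = math.sin(math.radians(incidence_deg)) * c_to / c_from
    if abs(s) > 1.0:
        # Beyond the critical angle no wave is transmitted.
        raise ValueError("total internal reflection: no transmitted ray")
    return math.degrees(math.asin(s))


if __name__ == "__main__":
    # Ultrasound leaving the water at 30 degrees from the vertical.
    angle_in_air = refracted_angle_deg(30.0, SPEED_OF_SOUND_WATER, SPEED_OF_SOUND_AIR)
    print(f"angle in air: {angle_in_air:.2f} degrees from the vertical")
```

Because sound travels much more slowly in air than in water, rays leaving the water are bent sharply toward the vertical, so an uncorrected image would misplace underwater objects.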

Drone ocean surveys

Conventional sonar systems can penetrate to depths of hundreds to thousands of meters, and the researchers expect their system will eventually be able to reach similar depths.

To date, PASS has only been tested in the lab in a container the size of a large fish tank. “Current experiments use static water but we are currently working toward dealing with water waves,” Fitzpatrick said. “This is a challenging but we think feasible problem.”

The next step, the researchers say, will be to conduct tests in a larger setting and, eventually, an open-water environment.

“Our vision for this technology is on-board a helicopter or drone,” Fitzpatrick said. “We expect the system to be able to fly at tens of meters above the water.”

Reference: “An Airborne Sonar System for Underwater Remote Sensing and Imaging” by Aidan Fitzpatrick, Ajay Singhvi and Amin Arbabian, 16 October 2020, IEEE Access.

DOI: 10.1109/ACCESS.2020.3031808